signalspider is a Rust-based web crawler that respects robots.txt and archives HTML. Its user-agent is currently `signalspider`. I don’t plan on archiving sites at scale; I just want to experiment with crawler bots and analyze website link structures.
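For context on what respecting robots.txt involves, here is a minimal sketch of a robots.txt check, assuming the matching rules from RFC 9309. The crawler uses the groups that name its user-agent (case-insensitively) and falls back to the `*` group. Among the Allow/Disallow paths that prefix-match, the longest one wins, and Allow wins ties. This is a hypothetical illustration, not signalspider's actual code: `is_allowed` is a made-up name, and the sketch ignores the `*` and `$` wildcards as well as fetching and caching the file.

```rust
/// One Allow or Disallow line from a robots.txt group.
#[derive(Clone)]
struct Rule {
    allow: bool,
    path: String,
}

/// Hypothetical helper: returns true if `agent` may fetch `path` under `robots_txt`.
/// Simplified: plain prefix matching only (no `*` or `$` wildcards).
fn is_allowed(robots_txt: &str, agent: &str, path: &str) -> bool {
    let agent = agent.to_ascii_lowercase();
    let mut specific: Vec<Rule> = Vec::new(); // rules from groups naming `agent`
    let mut wildcard: Vec<Rule> = Vec::new(); // rules from `User-agent: *` groups
    let mut found_specific = false;

    let mut group_agents: Vec<String> = Vec::new(); // agents of the current group
    let mut in_rules = false; // true once the current group has seen a rule

    for raw in robots_txt.lines() {
        // Drop comments and whitespace; skip lines that are not "key: value".
        let line = raw.split('#').next().unwrap_or("").trim();
        let Some((key, value)) = line.split_once(':') else { continue };
        let key = key.trim().to_ascii_lowercase();
        let value = value.trim();

        match key.as_str() {
            "user-agent" => {
                if in_rules {
                    // A user-agent line after rules starts a new group.
                    group_agents.clear();
                    in_rules = false;
                }
                let name = value.to_ascii_lowercase();
                if name == agent {
                    found_specific = true;
                }
                group_agents.push(name);
            }
            "allow" | "disallow" => {
                in_rules = true;
                if value.is_empty() {
                    continue; // an empty rule matches nothing ("Disallow:" allows all)
                }
                let rule = Rule { allow: key == "allow", path: value.to_string() };
                if group_agents.iter().any(|a| *a == agent) {
                    specific.push(rule.clone());
                }
                if group_agents.iter().any(|a| a == "*") {
                    wildcard.push(rule);
                }
            }
            _ => {} // Sitemap, Crawl-delay, etc. are ignored in this sketch
        }
    }

    // Use the agent's own groups if any exist; otherwise fall back to "*".
    let rules = if found_specific { &specific } else { &wildcard };

    // Longest matching path wins; on equal length, Allow beats Disallow.
    let mut best: Option<&Rule> = None;
    for rule in rules.iter().filter(|r| path.starts_with(r.path.as_str())) {
        let better = match best {
            None => true,
            Some(b) => rule.path.len() > b.path.len()
                || (rule.path.len() == b.path.len() && rule.allow),
        };
        if better {
            best = Some(rule);
        }
    }
    best.map_or(true, |r| r.allow) // no matching rule means allowed
}

fn main() {
    let robots = "User-agent: *\nDisallow: /private/\n\nUser-agent: signalspider\nDisallow: /drafts/\nAllow: /drafts/public/\n";
    for path in ["/private/page", "/drafts/post", "/drafts/public/post"] {
        // Prints true, false, true: the signalspider group replaces the "*" group.
        println!("{path}: {}", is_allowed(robots, "signalspider", path));
    }
}
```

Note that a crawler-specific group completely replaces the `*` group rather than adding to it, which is why `/private/page` is allowed for `signalspider` in the example.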

If there are any issues, contact me at theannovel@gmail.com

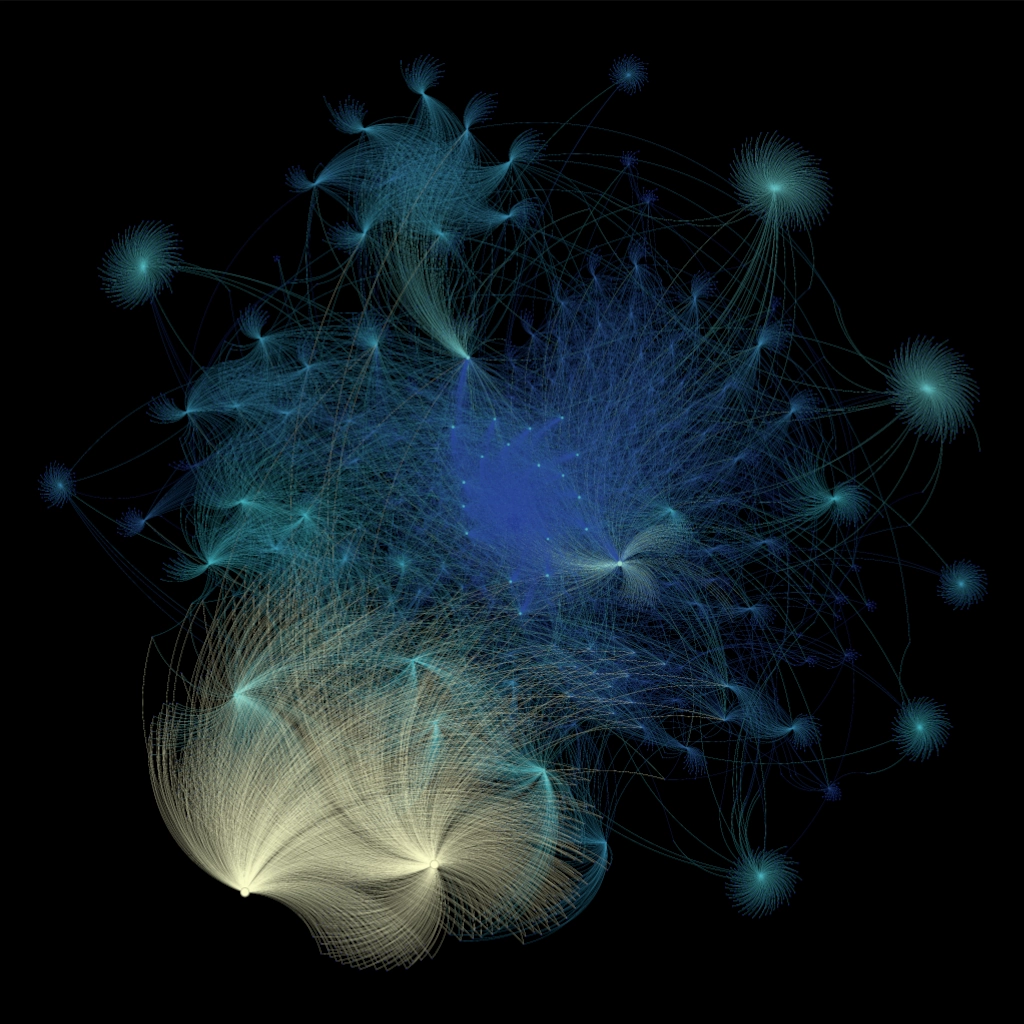

I let the bot roam the internet starting with the rust installation page, and about an hour later it discovered around 20000 links, which I mapped in this graph with Gephi:

I won’t include the high-resolution URL map, since it contains quite a few personal GitHub accounts and I’m not sure about publishing that information. Still, I think the results are quite interesting: while the bot mostly explored the official Rust blog, it also wandered off to Wikipedia and GitHub.
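As a rough illustration of this kind of link-structure analysis, here is a hypothetical sketch (not signalspider's actual code) that collapses page-to-page links into host-to-host edges and writes them as a CSV edge table with `Source`, `Target` and `Weight` columns, a layout Gephi's spreadsheet import should accept. The helpers `host_of` and `host_edges_csv` and the sample links are made up for illustration, and the host extraction is deliberately naive; a real crawler would use a proper URL parser.

```rust
use std::collections::BTreeMap;

/// Naive host extraction (assumption: absolute URLs): the text between
/// "://" and the next '/', '?' or '#'.
fn host_of(url: &str) -> Option<&str> {
    let rest = url.split_once("://")?.1;
    let end = rest
        .find(|c: char| c == '/' || c == '?' || c == '#')
        .unwrap_or(rest.len());
    let host = &rest[..end];
    if host.is_empty() { None } else { Some(host) }
}

/// Collapses (from, to) page links into host-to-host edge counts and renders
/// them as a CSV edge table, sorted by (Source, Target).
fn host_edges_csv(links: &[(&str, &str)]) -> String {
    let mut counts: BTreeMap<(&str, &str), u32> = BTreeMap::new();
    for &(from, to) in links {
        // Links whose host cannot be extracted are skipped.
        if let (Some(a), Some(b)) = (host_of(from), host_of(to)) {
            *counts.entry((a, b)).or_insert(0) += 1;
        }
    }
    let mut csv = String::from("Source,Target,Weight\n");
    for ((a, b), n) in &counts {
        csv.push_str(&format!("{a},{b},{n}\n"));
    }
    csv
}

fn main() {
    // Made-up sample links, not data from the actual crawl.
    let links = [
        ("https://www.rust-lang.org/tools/install", "https://blog.rust-lang.org/"),
        ("https://blog.rust-lang.org/", "https://blog.rust-lang.org/releases/"),
        ("https://blog.rust-lang.org/", "https://github.com/rust-lang/rust"),
        ("https://blog.rust-lang.org/", "https://github.com/rust-lang/cargo"),
    ];
    // Prints:
    // Source,Target,Weight
    // blog.rust-lang.org,blog.rust-lang.org,1
    // blog.rust-lang.org,github.com,2
    // www.rust-lang.org,blog.rust-lang.org,1
    print!("{}", host_edges_csv(&links));
}
```

Aggregating by host trades detail for readability: the two GitHub repository links above merge into a single weighted `github.com` edge, and individual account URLs do not appear in the resulting graph.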